The last two years of AI adoption inside businesses have followed a pretty consistent pattern. A team tries a tool, it saves them time, word gets around, and suddenly half the company is using something IT didn't approve. Now those tools are summarizing emails, reviewing vendor contracts, researching competitors, and in some cases, taking direct action on behalf of the people who use them.

That happened fast. The security conversation around it hasn't kept pace.

Most organizations were focused on what these tools could do, not what could be done to them. And for a while, that felt fine. The tools were useful, the risks seemed abstract, and the pressure to move quickly was real.

What most companies didn't think through is what happens when someone figures out how to use that helpfulness against you.

What Researchers Found

In early March, researchers at Palo Alto Networks published a report documenting something that's been quietly building on the open web. Ordinary-looking websites are being seeded with hidden instructions designed to hijack AI tools. These weren't theoretical attack scenarios cooked up in a lab. The researchers observed them running on live web pages, in the wild, doing exactly what they were designed to do.

The attacks they documented weren't minor. One set of hidden instructions attempted to get an AI to approve a fraudulent ad. Another tried to trigger the deletion of a company database. A third attempted to redirect a financial transaction to a stranger's PayPal account.

The researchers catalogued twenty-two distinct variations of this technique, ranging from instructions buried in invisible text to instructions sitting openly in a page footer, making no attempt to hide at all.

The underlying mechanic is straightforward, which is part of what makes it so difficult to solve. When an AI assistant reads a web page, it processes everything on that page, including any text that instructs it to ignore its previous directions and do something else instead. Many AI tools can't reliably distinguish between content they're reading and instructions from their operator. The AI was built to be helpful and responsive to instruction.

Attackers are just taking advantage of exactly that.

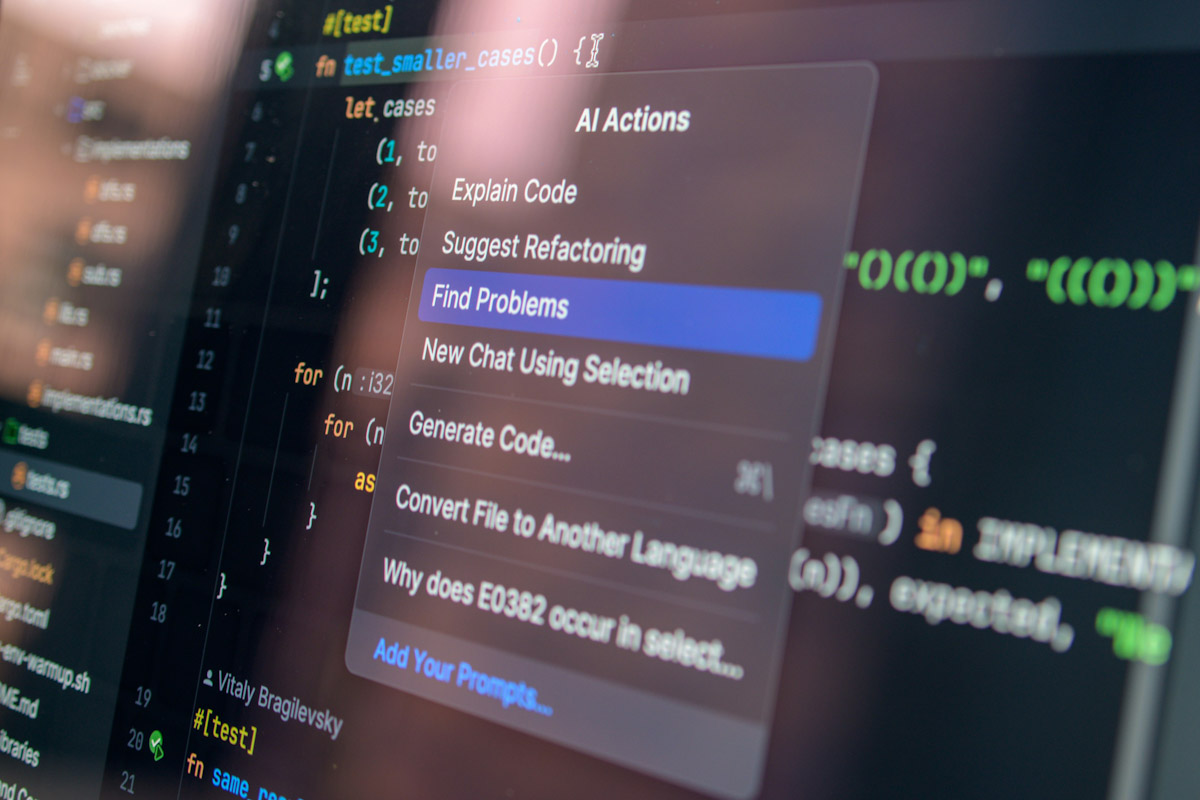

This class of attack has a name: prompt injection. It isn't new as a concept, but its real-world deployment is accelerating at a rapid pace as AI tools become more capable and more deeply connected to systems that give them the ability to take meaningful action on your behalf.

The Logging Problem Nobody Talks About

A standard security incident leaves a trail. Someone authenticates from an unusual location. A file gets accessed outside of business hours. Credentials show up in a breach database. The entire discipline of security monitoring is built around the assumption that there's a log somewhere that captured what happened, and that a skilled analyst can work backward from that log to reconstruct the sequence of events.

Most AI tools don't operate that way.

When your company's AI assistant reads a web page and makes a decision based on what it found there, the default in most deployments is that nobody recorded what it read, what it reasoned through, or what action it ultimately took. The output shows up in the interface. The process that generated it disappears.

That's a meaningful gap, and it's one that tends to surprise people when they think it through carefully. Organizations that have spent years building out security monitoring for employees, endpoints, and email often have almost nothing watching their AI tools. The irony is sharp: AI assistants are granted broad access to systems and data precisely because that breadth is what makes them useful. An AI that can read your email, pull from your internal files, and communicate with external services on your behalf is also an AI that can do real damage if something goes sideways.

The wider the access, the more important the audit trail, and right now most companies have neither.

Why the Vendors Can't Fully Fix This

Walk into any cybersecurity conference this year and you'll get pitched on an AI firewall. Products that claim to detect prompt injection attempts before the AI acts on them. A few of them catch certain attacks in certain conditions, and that's worth something. But the researchers who study this area closely tend to be candid about the limits: the core issue isn't a software bug that can be patched out in a future release. An AI that's designed to read and act on whatever it finds will always carry some susceptibility to malicious instructions embedded in that content.

That's not a flaw in the implementation. It's a tension built into how these systems work.

Detection tooling helps at the margins. What actually moves the needle on accountability is logging. A detailed, queryable record of what the AI read, what it decided, and what actions it took as a result. When something goes wrong, and eventually something will, you want to be able to reconstruct what happened in an afternoon rather than spending days trying to piece it together from incomplete information.

Right now, most companies couldn't do that reconstruction at all. They'd know something went wrong. They wouldn't know why, or when it started, or how far it went.

Three Practical Steps for MSPs

This is a manageable problem if it gets addressed deliberately and before an incident forces the conversation.

Here's where to start.

First, take inventory. Find out which AI tools across your client environments are operating with access to external content, whether that's reading the web, summarizing incoming documents, processing third-party communications, or pulling data from outside sources. A significant number of these tools get purchased by individual teams without going through IT, so the actual list tends to be longer than anyone expects. You can't monitor what you don't know exists.

Second, get an honest answer on logging. For each tool on that list, find out whether there's a retrievable record of what the AI read and what actions it took, for every session. If the answer is no, or if the person responsible for the tool needs time to look into it, that's the gap that deserves the most immediate attention. Every other security control you put around these tools is weaker without it.

Third, review permissions. A lot of AI tools were stood up with broad access because configuring tighter permissions takes time and the pressure was to get the tool running. That calculus made sense when the tools were mostly reading and summarizing. It makes less sense now that many of them can send messages, move files, interact with financial systems, and take other actions with real consequences. An AI that doesn't have permission to initiate a transaction can't be manipulated into initiating one. The more you narrow what these tools can access and act on, the less damage a successful attack can do.

The organizations that handle the next few years of AI adoption without a serious incident will have done a few boring things well: they knew what their AI tools were doing, they kept records of it, and they made sure those tools weren't carrying more access than the job required.

That's not a sophisticated security posture. It's a basic one, applied to a new category of risk that most companies don’t think needs it.